When a Gaming Console Outperformed the System

There are moments in technology when innovation does not come from complexity, but from perspective. The story of the Condor Cluster, a supercomputer built by the U.S. Air Force Research Laboratory using PlayStation 3 consoles, is one of those moments. It is not merely a curiosity or a clever engineering workaround. It is a case study in cost efficiency, unconventional thinking, and the quiet intersection between consumer technology and national security infrastructure.

In 2010, while governments and research institutions around the world were investing tens of millions of dollars in highly specialized supercomputing systems, the U.S. military pursued a different path. Instead of procuring a traditional high-performance computing system, the Air Force assembled a machine using 1,760 PlayStation 3 units, combined with graphics processing units and conventional server processors. The result was the Condor Cluster, a system capable of delivering approximately 500 trillion floating-point operations per second (500 teraflops).

At the time, this was not just impressive. It was strategically meaningful. The fundamental insight behind the project was not accidental. The PlayStation 3 was powered by the Cell Broadband Engine, a processor co-developed by Sony, IBM, and Toshiba. Unlike traditional CPUs, the Cell architecture was designed to handle parallel workloads at scale: a general-purpose PowerPC core coordinated a set of specialized Synergistic Processing Elements (SPEs), and computational tasks were distributed across them. This made it well suited for the type of data-heavy, parallelizable problems that define modern defense and intelligence operations.
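To make that pattern concrete, here is a minimal Python sketch of the split, process, and combine style of work the Cell was built for: a coordinating task cuts a signal into chunks, hands each chunk to a worker, and sums the partial results. It is only an illustration, not Cell or Condor code. The names `chunk_energy` and `parallel_energy`, the default worker count, and the use of a thread pool as a stand-in for processing elements are all assumptions made for this example.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_energy(samples):
    """Sum of squared samples for one chunk (hypothetical per-worker kernel)."""
    return sum(x * x for x in samples)


def parallel_energy(signal, workers=6):
    """Split `signal` into chunks, process each independently, combine results.

    `workers` is an illustrative stand-in for a number of processing elements.
    """
    if not signal:
        return 0
    size = -(-len(signal) // workers)  # ceiling division: samples per chunk
    chunks = [signal[i:i + size] for i in range(0, len(signal), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each chunk is independent, so the workers never need to communicate.
        partials = list(pool.map(chunk_energy, chunks))
    return sum(partials)


signal = list(range(1000))  # made-up input data
total = parallel_energy(signal)
```

Python threads will not speed up pure-Python arithmetic like this. The point is the shape of the computation: because no chunk depends on any other, the same work could be spread across as many processing elements, or consoles, as are available.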

What Sony designed for rendering graphics, physics, and gameplay dynamics turned out to be equally valuable for analyzing radar signals, processing satellite imagery, and running pattern recognition algorithms. This is where the Condor Cluster becomes more than a technical anecdote. It becomes a reflection of how innovation often emerges not from new inventions, but from the recontextualization of existing tools.

From a financial standpoint, the implications were profound. The total cost of the Condor Cluster was approximately two million dollars. At the time, a traditional supercomputer delivering comparable performance would have required an investment estimated between fifty and eighty million dollars. The difference is not marginal. It is structural. This delta is what separates incremental efficiency from strategic advantage.
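The size of that gap is easier to see as cost per unit of performance. The short Python sketch below uses only the figures quoted in this article (about two million dollars, about 500 teraflops, and a fifty to eighty million dollar estimate for a traditional system). It assumes that "comparable performance" means the same 500 teraflops; the constant and function names are made up for this example.

```python
# Figures as quoted in the article; all are approximate.
CONDOR_COST_USD = 2_000_000
CONDOR_TFLOPS = 500
TRADITIONAL_COST_RANGE_USD = (50_000_000, 80_000_000)


def cost_per_tflops(cost_usd, tflops):
    """Dollars spent per teraflop of peak performance."""
    return cost_usd / tflops


condor = cost_per_tflops(CONDOR_COST_USD, CONDOR_TFLOPS)

# Assumption: the traditional system delivers the same 500 teraflops.
traditional = tuple(
    cost_per_tflops(cost, CONDOR_TFLOPS) for cost in TRADITIONAL_COST_RANGE_USD
)

# How many times more the traditional system would have cost.
ratios = tuple(cost / CONDOR_COST_USD for cost in TRADITIONAL_COST_RANGE_USD)

print(f"Condor: ${condor:,.0f} per teraflop")
print(f"Traditional: ${traditional[0]:,.0f} to ${traditional[1]:,.0f} per teraflop")
print(f"Cost ratio: {ratios[0]:.0f}x to {ratios[1]:.0f}x")
```

On these figures, the Condor Cluster comes to roughly $4,000 per teraflop against $100,000 to $160,000 for the traditional estimate, a 25x to 40x difference.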

The use of off-the-shelf consumer hardware allowed the Air Force to bypass the long procurement cycles typically associated with specialized computing infrastructure. It also introduced a level of modularity and scalability that traditional systems struggled to achieve at the same cost point. Instead of relying on a monolithic architecture, the Condor Cluster was inherently distributed, built from more than a thousand independent yet interconnected units.

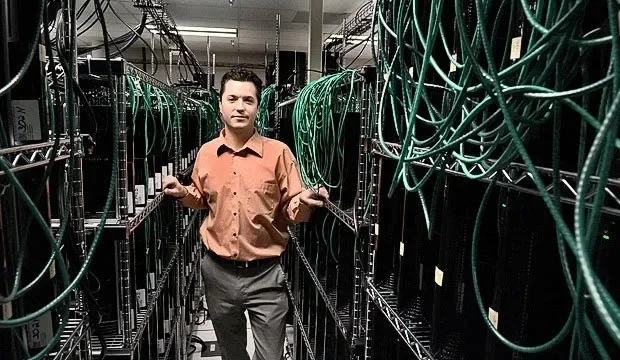

Physically, the system was as unconventional as its conceptual foundation. Miles of cabling connected the individual consoles, forming a dense computational fabric. Each PlayStation was a node, contributing its processing power to a larger, coordinated system. The image is almost symbolic: a network of gaming devices, originally designed for entertainment, repurposed into a machine capable of supporting national defense applications. Yet, beyond the numbers and architecture, there is a deeper layer to this story.

The Condor Cluster represents a moment when the direction of technological influence briefly inverted. Historically, many of the technologies that shape civilian life, such as GPS, the internet, and advanced materials, originated within military or government-funded research. In this case, however, the military leveraged a product born in the consumer market, recognizing that innovation had already occurred elsewhere. This inversion is not trivial. It signals a shift in where cutting-edge capabilities can emerge.

By the late 2000s, the scale of investment in consumer electronics, particularly in gaming, had reached a level where companies like Sony were effectively pushing the boundaries of computational performance in ways that rivaled traditional high-performance computing vendors. The gaming industry, driven by competition and mass-market demand, was optimizing for performance-per-dollar at a pace that institutional procurement could not easily match. The Condor Cluster was, in essence, a recognition of that reality.

It also highlights a broader principle that extends far beyond computing: efficiency is not always about building better systems, but about recognizing where value already exists. The ability to identify underutilized capabilities and to recombine them in meaningful ways is often what defines strategic thinking in both business and government. There is, however, a temporal dimension to this story.

The viability of using PlayStation 3 units in this way depended on a specific moment in the product’s lifecycle. Early versions of the PS3 allowed users to install alternative operating systems, including Linux, which made them accessible for research and computational purposes. Sony removed this capability, known as OtherOS, with a firmware update in 2010, effectively closing that window of opportunity. In other words, the Condor Cluster was not just innovative. It was opportunistic.

It captured a fleeting alignment between hardware capability, software flexibility, and cost structure. Such alignments are rare, and they do not last. This is perhaps the most important takeaway.

Technological advantage is often transient. What matters is not only the existence of opportunity, but the ability to recognize and act on it before it disappears. The Air Force did not invent the PlayStation 3, nor did it design the Cell processor. What it did was identify a moment when those technologies could be repurposed in a way that delivered disproportionate value. That is strategy in its purest form.

Today, the Condor Cluster is no longer at the frontier of computing performance. Advances in GPUs, cloud computing, and specialized AI hardware have redefined what is possible. Yet the lesson remains intact. In an era where capital efficiency and speed of execution are increasingly decisive, the ability to leverage existing systems, rather than defaulting to bespoke solutions, is more relevant than ever.

For companies, investors, and policymakers, the message is clear.

Innovation does not always require invention. Sometimes, it requires the discipline to look at what already exists and ask a different question.

Not “what is this designed to do?”

But “what else could this become?”